TLDR: Dimension tables change over time. SCDs are how you decide to handle those changes in your data pipeline. SCD type 1 overwrites values, type 2 tracks history, and dbt has native support for type 2 via dbt snapshot. This blog covers types 1-4, but most focuses more detailed on type 2.

In my last blog post, I showed what a minimal dbt project looks like. Let's now jump into how a specific data engineering concept is commonly implemented in dbt.

Ralph Kimball's dimensional modeling paradigm differentiates 2 main table types, fact tables and dimension tables. In general, fact tables contain events happening over time (likely to have a date or timestamp) while dimension tables provide context around those facts (like customer names, product categories, store locations, etc.). This way very long fact tables can be queried efficiently in the database, and the analyst can later just join exactly those additional fields from the dimension tables that are really needed.

This process (pulling repetitive attributes into their own dedicated tables, then referencing them via keys) is called normalization. But this means that when something changes in a dimension table (say a customer moves to a new city or a product gets reclassified), that single change silently alters the context of every fact record that references it. So there's a need to think about how these changes are supposed to be handled in the data. Do you overwrite and lose the old value? Keep both? This is the problem Slowly Changing Dimensions (SCDs) address.

SCD Types

SCDs come in different types, handling how changes are handled or tracked in the data warehouse differently. They all have their use case:

| Type | What happens on change | History? | When to use |

|---|---|---|---|

| 1 | Overwrite the old value | None | Current state is all you need |

| 2 | Insert a new row, close the old one (valid_to, end_date, is_current) |

Full | You need to know what things looked like at any point in time |

| 3 | Add a previous_value column |

One level back | Rare, transition periods |

| 4 | Separate current + history tables | Full (split) | Performance-sensitive dashboards |

SCD types 0 (just never update anything) or 5 and 6 (hybrids of other types) also exist, but are more niche than the others. SCD type 1 is just a simple overwrite. Type 2 is the most widely used SCD type, and it actually needs implementation effort. So how to implement types 1 & 2 in dbt?

SCD type 1: Plain overwrite

This is the simplest approach: Some attribute changes, you'd like to update it, and you don't ever need the old version again. It's useful for corrections (fixing a typo in a customer name), attributes where the old value is meaningless (current email address) or when storage, costs or complexity matter more than historical accuracy. In dbt, you can achieve that with an incremental model using the merge strategy:

So in this example, on each run, dbt will

- ignore any rows not newer than the target table's last update (

updated_atnewer than latest knownupdated_at) - match incoming rows on

customer_idand overwrite existing values with the new data

It's clean, efficient on storage (1 row per entity) and works on both Snowflake and BigQuery out of the box. No history is kept though. And no rows will ever be deleted based on incoming data. Using {{ this }} means a model can reference itself without triggering a circular reference check.

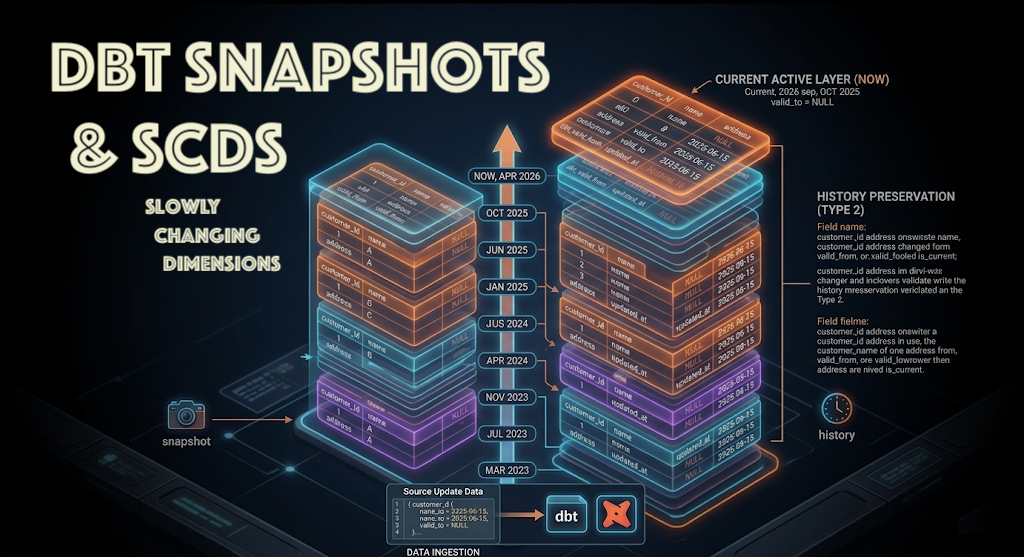

SCD type 2: Track full history with dbt snapshots

No history means you won't be able to answer questions like "what was this customer’s address in January?” though. With an SCD type 2 architecture, you could. SCD type 2 preserves history by adding rows and setting flags: When an attribute changes, the current row gets 'closed' (via end_date/valid_to dates, or also an is_current flag). A new row is inserted with the updated value. Both versions are kept. This way you get full history and know what the dimension looked like at any point in time.

Useful for anything where historical context matters for analysis. Category changes, past product prices, subscription plan changes, etc. In general, if a fact table references this dimension column and the answer depends on when the fact happened, Type 2 will deliver.

dbt has SCD type 2 functionality baked in, with snapshots, that can be run before dbt run in production. Snapshots are .sql or .yml files in the snapshots/ directory, are not models, and are triggered with dbt snapshot instead of dbt run:

The snapshot table reads from the source and writes to its own customer_snapshot table in the snapshots schema (see target_schema) that maintains its own state. So, when you now run dbt snapshot, dbt compares the two tables by matching on customer_id and checking if updated_at has changed. For each row where something changed (e.g. address or department), it sets dbt_valid_to on the existing row to the incoming row's updated_at value, and inserts a new row with dbt_valid_from (same updated_at value) and dbt_valid_to = NULL.

Instead of the source table's one per customer, the resulting snapshot table now has one row per version of each customer, preserving the full history of changes over time (as multiple rows per customer). On top of the new fields dbt_valid_to and dbt_valid_from, it also adds the fields dbt_scd_id (unique surrogate key, e.g. combining customer_id & updated_at) and dbt_updated_at (keeps track of snapshot runs).

What else is configured here?

unique_key- the field that incoming & existing rows are matched on (same with SCD type 1's merge)updated_at- tells dbt which column to use for timestamp comparisoninvalidate_hard_deletes- if a customer disappears from the source entirely, dbt will close their latest row instead of leaving it as current (falsely)

Change detection strategies (strategy keyword)

- timestamp: compares the updated_at column. If the timestamp is newer, the row has changed. More efficient, but requires a reliable timestamp in the source.

- check: compares column values directly (check_cols='all' or a specific list). Changes are detected even without a timestamp, but more expensive because it compares every value row by row.

As a rule of thumb, pick timestamp when you trust your source’s updated_at. Pick check when you don’t have one, or when the source is a flat file dump with no change data capture at all (i.e. a daily overwritten file that only contains the current state, with no change metadata).

So how to query a customer's past state?

Querying point-in-time state:

This is the downside of SCD type 2's. Because multiple versions (= states over time) now exist, you need to be explicit when querying. To get the state of a customer as of a specific date:

And to get only the current state (for dashboards that don’t need history):

Daily snapshots as a simpler type 2 alternative

To avoid the characteristic WHERE dbt_valid_to IS NULL and versioning headache from a downstream/querying perspective, you could also decide to just capture daily snapshots without matching anything or adding extra rows. This will dump the entire dimension table into a new date partition every day:

This way you'd get the same benefit as type 2 (ability to ask “what did the catalog look like last Tuesday?”), while avoiding the change-detection logic completely. The tradeoff is storage: you’re keeping daily copies of every unchanged row. Cheap for a product catalog with 50K rows, adding up for a table with hundreds of millions of rows.

When to prefer this over proper SCD2

- The dimension is small (< millions of rows)

- The source has no reliable

updated_attimestamp - Fact tables are all date-partitioned (

fact.dt = dim.snapshot_dateas join pattern) - You value simplicity over storage efficiency

When to prefer proper SCD2

- The dimension is large and changes are sparse

- You need to track which attributes changed and when

- Downstream models need valid_from / valid_to fields anyway

SCD types 3 & 4: Brief mentions

Type 3 adds a previous_value column instead of a new row, keeping one level of history only. It might be useful during reorganizations (old region vs. new region for a transition period for exampl), but in practice you can always derive this from a Type 2 table with a LAG() window function.

Type 4 splits the current state and the history into two separate physical tables. The main dimension stays lean (always type 1, fast joins), while a separate history table captures every change. Useful when dashboard performance is more critical for the current-state table for example, and you want to avoid the WHERE dbt_valid_to IS NULL filter everywhere. In dbt, the cleanest type 4 implementation might just be a snapshot for history plus a view on top filtering to current rows.

So which one to pick?

In dbt, the decision might often come down to something like this:

Do you need history?

No → Type 1 (incremental merge). Done.

Yes, and the dimension is reasonably sized, with a good updated_at? → dbt snapshot (Type 2).

Yes, but the source is messy & small, and you’d rather not deal with change detection? → Daily snapshots.

Overall, dbt enables you to avoid complex stored procedures or hand-managed surrogate keys (e.g. a hash of customer_id and valid_from). A config block in a snapshot file abstracts this away. You just define what to track and how to detect changes. You could make the argument that an SCD type 2 went from “expensive to maintain” to “why wouldn’t you, at least for dimensions where history matters?”